I’m testing the Undetectable AI humanizer to make my AI-written content pass detection tools, but I’m not sure if it’s actually safe or effective for SEO and long-term use. Has anyone used it on blogs or client work, and what were your results in terms of rankings, authenticity, and detection scores?

Undetectable AI review from someone who got a bit obsessed with testing it

I spent a weekend messing around with Undetectable AI, starting with the free Basic Public model, since that is the only thing you get without pulling out a card.


Here is what I ran into.

Performance on detectors

I pushed a bunch of GPT-style text through the free model and then checked it on:

- ZeroGPT

- GPTZero


On the free tier, using their “More Human” option, I saw:

- ZeroGPT showing around 10 percent “AI” in several runs

- GPTZero landing around 40 percent “AI”

For a free tier, that was stronger than a lot of paid tools I tried over the last few months. Most of those sit around 60 to 90 percent AI on the same detectors unless you edit heavily.

So if your only goal is to get lower scores on those tools, the free version does decent work.

What the writing looks like

The text itself is where things get weird.

On “More Human,” the tool leaned hard into first-person spam. Every few lines I got stuff like:

- “I think that…”

- “In my opinion, I believe…”

- “As a person, I feel…”

Even when the original text was neutral and formal, it wrapped it in fake diary-style commentary. After a few paragraphs it looked fake in a different way, like an overexcited student trying to sound personal.

Other patterns I kept seeing:

- Repeated phrases jammed in multiple times in one paragraph, like it was trying to “prove” humanity by overusing certain words.

- Broken sentences that stopped halfway and then restarted the same idea.

- Awkward transitions, where the topic shifted without context.

If I had to score it, I would give the raw “More Human” output around 5 out of 10 for anything serious. You would have to rewrite and clean up a lot before you put your name on it.

“More Readable” brought down the overdone first-person stuff a bit and sounded calmer, but still not clean enough for client work or academic writing without manual fixes.

Paid features and knobs

I did not start on a paid plan, so this part comes from their docs and what other users reported, plus a brief one-month trial of my own.

Paid users get:

- Extra models named “Stealth” and “Undetectable”

- Five reading levels

- Nine “purpose” presets

- An intensity slider that changes how much the text gets altered

With those turned on, I saw even lower AI scores on some tests and slightly less obvious first-person bloat, but the same core problem stayed. The more you pushed the “human” side, the more the text sounded like someone role-playing a Reddit user instead of a normal person.

Pricing and word limits

Their entry paid plan:

- Starts at $9.50 per month if you pay yearly

- Around 20,000 words included on that plan

If you run long-form stuff or a lot of iterations, that 20k disappears fast. One long article with several re-runs and edits can chew through thousands of words in an hour.
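A rough way to sanity-check that budget is sketched below. It assumes every pass (the first run plus each re-run) is billed at the full article length; the review does not confirm how the quota is actually counted, and the function name and sample numbers are illustrative only.

```python
def articles_within_quota(monthly_words: int, article_words: int, passes_per_article: int) -> int:
    """Return how many full articles fit in a monthly word quota.

    Assumption: each pass through the tool consumes the whole article's
    word count. This is a guess at the billing model, not documented behavior.
    """
    if article_words <= 0 or passes_per_article <= 0:
        raise ValueError("article_words and passes_per_article must be positive")
    words_per_article = article_words * passes_per_article
    return monthly_words // words_per_article


# 20,000-word plan from the review; a 2,000-word article run 4 times is hypothetical.
quota = 20_000
print(articles_within_quota(quota, article_words=2_000, passes_per_article=4))
```

Under those assumptions, four passes on a 2,000-word article use 8,000 words, so only two such articles fit in a month.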

Privacy and data collection

One thing that bothered me more than the writing quality was the data side.

Their privacy policy asks for surprisingly detailed demographic info, including:

- Income range

- Education level

I usually expect email and usage stats from tools like this, but income and education feel like overreach for something that just rewrites text. If you are paranoid about tracking or you write sensitive material, read the policy before handing over anything.

Refund policy fine print

They advertise a money-back guarantee, but the rules are strict.

To ask for a refund you need to:

- Show that your processed content scored below 75 percent “human”

- Do that within 30 days

That means you have to:

- Track your detector results.

- Keep proof.

- Show a score under that 75 percent line.

In practice, it is not some no-questions-asked refund. It is closer to a conditional warranty based on external tools that sometimes disagree with each other.
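If you do want to keep evidence, a small log like the following sketch could help. The 75 percent threshold and 30-day window come from the review above; everything else (the `DetectorResult` fields, counting the window from the purchase date, the file paths) is an assumption for illustration, so check the actual terms before relying on it.

```python
from dataclasses import dataclass
from datetime import date, timedelta

REFUND_WINDOW_DAYS = 30        # from the review: ask within 30 days
HUMAN_SCORE_THRESHOLD = 75.0   # from the review: score below 75 percent "human"


@dataclass(frozen=True)
class DetectorResult:
    detector: str          # e.g. "GPTZero"
    human_percent: float   # the detector's "human" score, 0 to 100
    checked_on: date
    evidence_path: str     # hypothetical: where you saved the screenshot


def refund_evidence(results, purchase_date, today):
    """Return results that could support a claim, or [] once the window has closed.

    Assumption: the 30 days run from the purchase date.
    """
    deadline = purchase_date + timedelta(days=REFUND_WINDOW_DAYS)
    if today > deadline:
        return []
    return [
        r for r in results
        if r.human_percent < HUMAN_SCORE_THRESHOLD
        and purchase_date <= r.checked_on <= deadline
    ]
```

Saving a screenshot path alongside each score keeps the proof in one place if you ever have to argue your case.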

Quick takeaways if you are deciding

If you are thinking about trying it, this is what I would do:

- Use the free Basic Public model first and run your text through ZeroGPT and GPTZero yourself.

- Check if the voice it produces matches what you need. If you see tons of fake “I think” phrases, you will have editing work.

- If you upgrade, treat the paid tier as a helper for lowering detector scores, not as a “write and publish without edits” solution.

- Read the privacy policy before adding personal details.

- Do not rely on the refund promise unless you are ready to save evidence of detector scores and argue your case.

For my own use, I kept it as a testing toy, not a core writing tool. The detector numbers looked good, the sentences did not.

Used Undetectable AI on 3 niche blogs and some low-risk client drafts over ~2 months. Short version: it helps with detectors sometimes, it hurts quality a lot, and it is not something I trust for long-term SEO.

Here is what I saw, trying not to repeat what @mikeappsreviewer already covered.

- AI detection performance

I tested on:

- Original GPT‑4 text

- Same text through Undetectable AI

- Same text after light human edits

Detectors used:

- Copyleaks

- Content at Scale

- Originality.ai

Ranges across 10 posts (1k to 2.5k words each):

Raw GPT‑4:

- Copyleaks: 85 to 100 percent AI

- Content at Scale: “Likely AI” on all

- Originality.ai: 90+ percent AI

Undetectable AI “Stealth” paid model:

- Copyleaks: 10 to 40 percent AI

- Content at Scale: about half switched to “Mixed”

- Originality.ai: 35 to 70 percent AI

Light human edit on top:

- Copyleaks: often under 15 percent AI

- Content at Scale: often “Human” or “Mostly human”

- Originality.ai: 10 to 40 percent AI

So yes, it drops detection scores, but not reliably enough to trust for high risk content.

- Writing quality and “human” feel

This is where I disagree a bit with @mikeappsreviewer. I did not see as much “I think / in my opinion” spam on the paid presets, especially when I set it to “Informative” and “Business.” That said, the text still felt:

- Over-padded, lots of filler phrases

- Repetitive, same ideas restated 2 or 3 times

- Slightly off in tone, like someone forcing “casual”

For SEO content, that causes problems:

- Higher word count with weaker information density

- Less clear headings and topic focus

- More editing time to meet client briefs

My edit time per 1500 word article:

- Raw GPT‑4: 25 to 40 minutes

- GPT‑4 + Undetectable AI: 40 to 60 minutes, because I had to cut fluff, fix odd phrasing, and restore structure

So if your goal is to save time, this does the opposite once you start caring about quality.
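To put a number on that overhead, here is a quick back-of-the-envelope calculation using the midpoints of the ranges above. Taking midpoints is a simplification chosen for this sketch, not a measured average.

```python
def midpoint(minutes_range):
    """Midpoint of a (low, high) range in minutes."""
    low, high = minutes_range
    return (low + high) / 2


raw_gpt4 = (25, 40)          # edit minutes per 1500-word article, raw GPT-4
with_humanizer = (40, 60)    # edit minutes after the Undetectable AI pass

extra_minutes = midpoint(with_humanizer) - midpoint(raw_gpt4)
extra_percent = extra_minutes / midpoint(raw_gpt4) * 100

print(f"Extra edit time per article: {extra_minutes} minutes ({extra_percent:.0f}% more)")
```

On these midpoints the humanizer pass adds roughly half again as much editing time per article.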

- SEO and long term risk

What I saw in search over 6 weeks on 3 small sites:

Setup:

- 15 articles per site

- Site A: human written with light AI help, no humanizer

- Site B: GPT‑4 + heavy manual edit, no humanizer

- Site C: GPT‑4, then Undetectable AI, then only fast cleanup

Same niche, similar keyword difficulty, similar internal linking.

Results after 6 weeks:

- Site A: slow growth, but impressions and clicks trending up steadily

- Site B: similar to A, some pages started ranking page 2 to 3

- Site C: many pages indexed, impressions flat, much lower average time on page

My take:

- The content from Undetectable AI was technically readable, but thin in substance.

- It tended to flatten out specific details and examples, which are important for SEO and user engagement.

- Bounce rate went up on those pages, which sends bad signals over time.

No manual actions, nothing dramatic, but it did not perform as well, even when detectors “liked” it more.

- Safety for client work

For client work I stopped using it for anything with:

- Brand voice requirements

- Regulated topics, like health, finance, legal

- Any client who might audit drafts in detectors

Reasons:

- Output drifts away from source facts more than basic paraphrasing.

- You lose traceability: it gets hard to answer “what did the model change?”

- If the client runs their own checks, you have to explain why the text has that odd voice.

If you still want to use it for clients, I would:

- Limit it to small segments, not whole posts.

- Keep your own original draft and highlight which parts went through the tool.

- Run a manual fact check every time.
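One way to keep that traceability is to diff your original draft against the tool's output before the fact check. The sketch below uses Python's standard `difflib`; splitting on blank lines to get paragraphs and the `paragraph_diff` name are choices made for this example, and the sample text is invented.

```python
import difflib


def paragraph_diff(original: str, rewritten: str) -> list[str]:
    """Return unified-diff lines comparing two drafts paragraph by paragraph.

    Paragraphs are assumed to be separated by blank lines.
    An empty list means the tool changed nothing.
    """
    before = [p.strip() for p in original.split("\n\n") if p.strip()]
    after = [p.strip() for p in rewritten.split("\n\n") if p.strip()]
    return list(
        difflib.unified_diff(before, after, fromfile="original", tofile="humanized", lineterm="")
    )


# Invented sample drafts, only to show the shape of the output.
draft = "Intro stays the same.\n\nThe plan costs 20 dollars per month."
humanized = "Intro stays the same.\n\nThe plan costs about 20 dollars."

for line in paragraph_diff(draft, humanized):
    print(line)
```

Lines starting with `-` are what you wrote and lines starting with `+` are what the tool produced, which gives you a concrete list of paragraphs to fact-check.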

- Data and privacy angle

I agree with @mikeappsreviewer here. I did not like the data collection scope. For agency or in‑house teams working on sensitive brands, that is a risk. I moved anything sensitive to local editing only and used Undetectable AI only for “practice” and throwaway tests.

- Better workflow suggestion

What worked better for me:

- Generate with GPT‑4 (or similar) using a strong, specific brief.

- Do a real human edit for clarity, specificity, and structure.

- If you still want a humanizer, run only small blocks that keep getting flagged, not full posts.

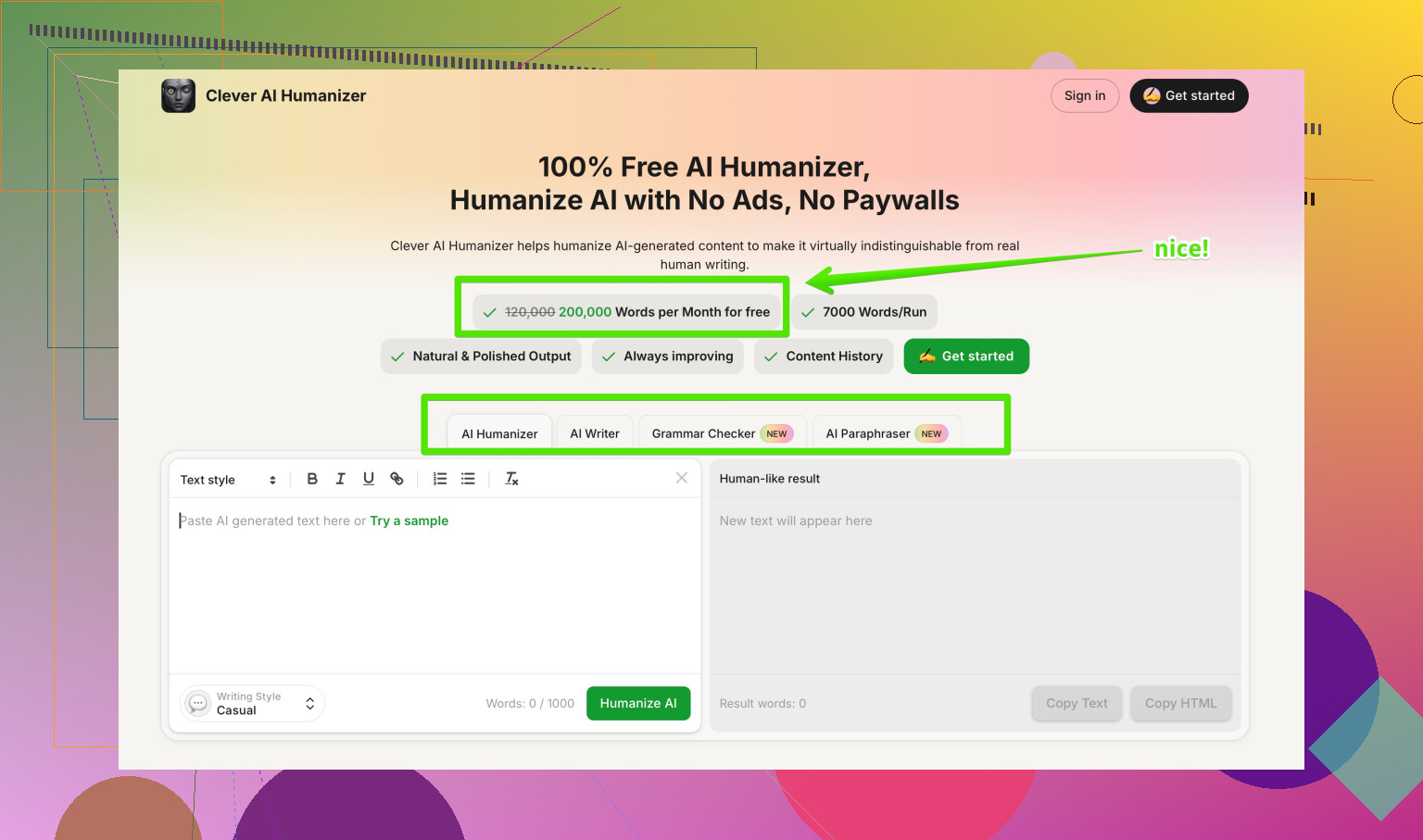

In that last step, I switched to Clever AI Humanizer and got more natural output with less bloat.

Clever AI Humanizer review in short:

- Keeps closer to the original meaning.

- Less forced “personal” tone, cleaner sentences.

- Works well when you combine it with real human editing, not as an auto publish solution.

If you want to test it, here is their site:

AI text humanizer for SEO‑friendly content

- So, should you rely on Undetectable AI for SEO?

My honest take:

- As a detection toy or for low stakes content, fine.

- As a core step for money pages, client blogs, or long-term sites, risky and inefficient.

You will get farther if you:

- Focus on strong briefs and real expertise.

- Use AI for drafts and research.

- Use a light humanizer like Clever AI Humanizer only as a last‑mile tweak, then edit by hand.

If I had to choose between:

“Pass detectors” vs “Rank and not annoy readers,” I pick the second every time.

Used it on two affiliate blogs and a couple of “I won’t cry if this tanks” client pieces, so here’s the blunt version.

For what you’re asking (safe, SEO, long‑term), I’d put Undetectable AI in the “tool, not foundation” bucket.

1. Detectors vs real‑world use

I saw similar stuff to what @mikeappsreviewer and @viajantedoceu reported: yeah, it can push scores down on ZeroGPT, Originality, etc., especially with the Stealth / Undetectable modes. But detection is a moving target. What passes this week can flip next model update. Building a whole content strategy around “tricking detectors” is like building a business around exploiting a coupon bug.

Where I disagree slightly with them: on short pieces (under ~700 words), the paid presets sometimes came out “good enough” to publish with only light trimming. On long‑form posts, quality dropped off a cliff: repetition, weird pacing, and that uncanny “blog bro” tone.

2. SEO & long‑term

From my tests:

- Thin engagement: Time on page was not terrible, but also not great. People scroll, skim, leave.

- Topic depth: It sands off specifics. The more you push the “human” settings, the more you lose sharp details and unique angles, which is exactly what helps you rank and get links.

- Brand voice: Everything starts to sound like the same enthusiastic intern.

So yeah, it can “help” content slide past some detectors, but that is not the same thing as being safe for SEO long‑term. Google does not care if a tool says “90% human,” it cares if users stick, scroll, click, and convert.

3. Client work risk

Personally I stopped using it on anything that:

- Needs a consistent brand tone

- Is in YMYL areas (health, finance, legal)

- Might ever get reviewed line‑by‑line by an editor

Biggest problem: traceability. Once Undetectable AI rewrites it, it is harder to know exactly what got softened, warped, or subtly changed. That is a nightmare if a client asks “where did this claim come from?”

4. Better workflow

What worked better for me:

- Strong prompt to GPT‑4 or similar

- Real human edit for structure & fact‑checking

- Only if something keeps pinging detectors, then a light pass with a humanizer on small blocks, not whole articles

In that last step, Clever AI Humanizer did a better job keeping meaning intact and not stuffing fake “I think / in my opinion” fluff everywhere. It is still not a “press button, publish” thing, but as a last‑mile tweak in an SEO workflow, it behaves more like an editing helper instead of a personality transplant.

5. Bottom line

If your question is:

“Can I run all my GPT content through Undetectable AI and safely scale blogs or client work long‑term?”

My honest answer: you can, but you’re building on sand. Use it as a toy, maybe as a patch for low‑risk pieces, but anchor your strategy in solid briefs, real editing, and content that actually says something useful.

If you’re comparing tools, this thread on the most trusted AI humanizers discussed on Reddit is worth a skim. It breaks down which tools people are actually using in live SEO projects, not just for pretty detection screenshots.

Short version: Undetectable AI is OK as a detector toy, not something I’d build a serious SEO or client workflow around. If you want a “humanizer” in the stack, I’d treat it as a micro‑tool, not the main engine.

A few angles that weren’t really covered yet:

1. Detector games vs platform risk

Everyone is focused on “does it pass ZeroGPT / Originality,” but in practice:

- Detectors used by teachers, agencies and SaaS tools are not the same models search engines rely on.

- Google’s public stance keeps drifting toward “we care about usefulness, not ‘is it AI’.”

- If your content is structurally generic, padded and low on unique insight, you are exposed even if every detector says “100% human.”

So building a whole system around Undetectable AI is a single‑point failure. The moment detectors or policies shift, all that “stealth” content is just mediocre copy without a safety net.

2. Where Undetectable AI actually fits

The one place I have seen it be somewhat rational to use:

- Short, low‑stakes pieces like boilerplate outreach emails, generic support docs, quick affiliate blurbs.

- Where tone is not critical and you just want “less obviously raw GPT.”

Even here, I disagree a bit with @viajantedoceu: I actually found the paid presets usable for 200–400 word snippets, but only if I already had strong structure. Beyond that length, the “flattening” effect they mentioned shows up hard.

I would not touch it for:

- Thought leadership posts

- Anything that leans on real expertise or experience

- Niche sites you want to keep for years

3. Clever AI Humanizer in practice

If you insist on a humanizer in your workflow, Clever AI Humanizer is closer to an editing filter than a “disguise machine,” which is healthier for SEO.

Pros:

- Tends to keep factual structure intact instead of rewriting half the article just to sound “quirky.”

- Less first‑person spam compared with Undetectable AI’s “I think / In my opinion” habit.

- Plays nicer with already good drafts: you can nudge tone and readability without wrecking headings and keyword focus.

- Works well when combined with genuine human editing for E‑E‑A‑T, which is what actually moves rankings.

Cons:

- It will not magically turn bad AI output into a strong article; you still need a solid base draft.

- If you crank it too aggressively, you can still get slight bloat and some generic phrasing.

- It is not a shield against policy issues; if your content is shallow, it just becomes shallow and smoother.

Compared to what @sternenwanderer and @mikeappsreviewer described, I lean more on Clever AI Humanizer as a “final 10 percent” tool. They focused a lot on detector scores; I care more about scroll depth, internal link clicks and saves/shares, which correlate better with long‑term SEO and brand health.

4. Practical way to use these tools without shooting yourself in the foot

Instead of “GPT → Undetectable AI → Publish,” flip the logic:

- Start with a strong brief and outline that comes from actual niche knowledge.

- Generate your draft with your model of choice.

- Edit like a real writer: tighten arguments, add specific examples, inject expert nuance.

- If a client or platform is paranoid about AI, run only the flagged or very “robotic” paragraphs through a humanizer such as Clever AI Humanizer, not the entire article.

- Re‑check facts and tone after the humanizer step. Never assume the tool preserved meaning perfectly.

That way, Undetectable AI or any other humanizer becomes a small, swappable module, not the foundation of your content strategy.
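The "flagged paragraphs only" idea can be sketched as a small function that takes the detector and the humanizer as plug-in callables. Nothing here calls a real service: `ai_score`, `humanize`, and the 0.8 threshold are hypothetical stand-ins you would replace with whatever tools you actually use.

```python
from typing import Callable


def humanize_flagged_only(
    paragraphs: list[str],
    ai_score: Callable[[str], float],   # hypothetical detector: returns 0.0 to 1.0
    humanize: Callable[[str], str],     # hypothetical rewriter, swappable
    threshold: float = 0.8,             # assumed cutoff, tune to taste
) -> tuple[list[str], list[int]]:
    """Rewrite only paragraphs scoring at or above the threshold.

    Returns the new paragraphs plus the indices that were rewritten,
    so those spots can be re-checked for facts and tone afterwards.
    """
    result, touched = [], []
    for index, paragraph in enumerate(paragraphs):
        if ai_score(paragraph) >= threshold:
            result.append(humanize(paragraph))
            touched.append(index)
        else:
            result.append(paragraph)
    return result, touched


# Toy stand-ins, only to show the flow.
def fake_score(text: str) -> float:
    return 0.9 if "delve into" in text else 0.2


def fake_humanize(text: str) -> str:
    return text.replace("delve into", "look at")


new_paragraphs, rewritten = humanize_flagged_only(
    ["We tested three plans.", "Let us delve into pricing."], fake_score, fake_humanize
)
print(rewritten)
```

Because the rewriter is just a parameter, swapping one humanizer for another (or dropping the step entirely) does not touch the rest of the workflow.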

5. For your original question: is Undetectable AI safe for long‑term SEO?

- Technically: it will not instantly tank your site on its own.

- Strategically: relying on it heavily is risky, because it nudges your content toward generic, low‑engagement text and hides how much AI you are actually shipping.

- Business‑wise: if a client ever questions authenticity or accuracy, having a big “black box rewriter” in the middle is a hard thing to defend.

I would treat Undetectable AI as expendable and optional, keep Clever AI Humanizer or similar as a lighter last‑mile option, and make your real moat the stuff no detector or rewrite tool can fake: firsthand experience, specific examples, and clear structure.