I’m trying to figure out if GPTinf’s humanizer is actually safe and effective for making AI-written content sound more natural without getting flagged. I’ve seen mixed opinions online and don’t want to risk my site’s SEO or trust with readers. Can anyone who’s used it explain how well it works, any downsides, and whether it’s worth relying on for regular content publishing?

GPTinf Humanizer review from someone who got curious and poked it way too much

I stumbled on GPTinf because of that big “99% success rate” line on the homepage. I sometimes run content through detectors before sending it to clients, so anything that promises that level of success makes me suspicious.

So I tried to break it.

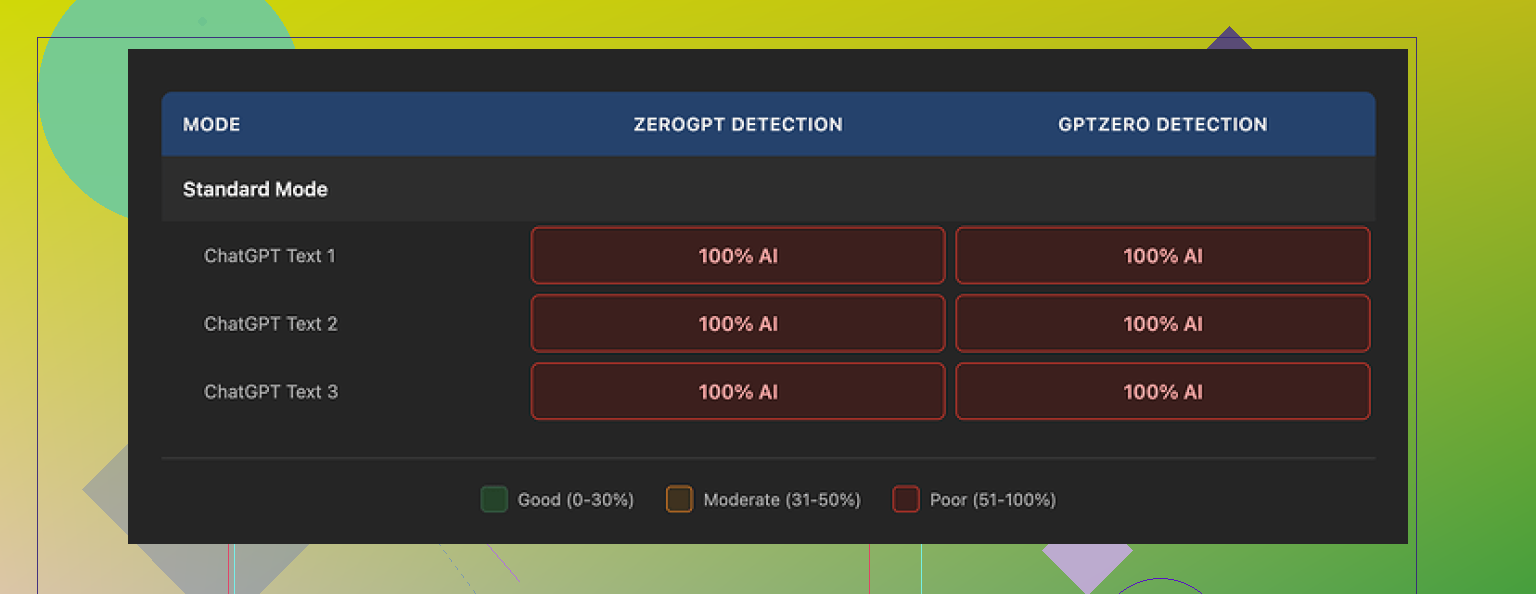

I used the same test batch I run on every “humanizer”: a mix of generic AI blog paragraphs, some very obvious ChatGPT how‑to text, and a couple of dense explainers. Then I pushed the outputs through GPTZero and ZeroGPT. No cherry-picked examples, just the whole thing.

Result: 0% success.

Every single rewrite from GPTinf, across all modes, was flagged as 100% AI-generated by both GPTZero and ZeroGPT. No borderline scores. No “partially human.” Full red bar.

That “99% success rate” started to feel more like a decoration than data.

What the text felt like

To be fair, the writing itself is not terrible.

If I had to rate it, I’d put it around 7 out of 10. Sentences were cleaner than raw ChatGPT output. Fewer weird transitions, fewer robotic phrases. The grammar was fine. No obvious nonsense.

One small thing I noticed: GPTinf removes em dashes from the output. As in, every single one. That made me laugh because I know some detectors watch for punctuation patterns, and this looked like someone tried to “fix” that signal at the surface level.

The problem is deeper. Even when the punctuation changed, the underlying structure still felt like standard large language model writing. The same rhythm. The same over‑structured explanations. The same habit of wrapping up every paragraph in a neat little bow.

Detectors picked up on it instantly.

When I ran the same test set through Clever AI Humanizer, it scored much better on GPTZero and ZeroGPT, and I did not need to pay or sign up. The text from Clever looked closer to how my clients write: a bit uneven, some mild redundancy, not as polished, which is what you want in this use case.

Word limits and pricing

GPTinf’s free usage is tight.

Here is what I hit:

• Without an account: about 120 words per run

• With an account: about 240 words per run

If you want to test more than a couple of paragraphs, you end up chopping your text into chunks. I tried a bunch of different samples and hit the cap fast. I did not feel like feeding it all my email addresses, so I used a few spare Gmail accounts to push more words through.
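If you do end up chopping text by hand, a small helper makes it less tedious. This is only a sketch: the function name `chunk_by_words` is made up for illustration, the 120-word default mirrors the cap I hit without an account, and I am assuming the cap counts whitespace-separated words, which I did not verify. It packs whole sentences into each chunk where it can, and hard-splits only when a single sentence is longer than the cap.

```python
import re


def chunk_by_words(text: str, max_words: int = 120) -> list[str]:
    """Split text into chunks of at most max_words words, keeping sentences whole when possible."""
    if max_words < 1:
        raise ValueError("max_words must be at least 1")

    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())

    chunks: list[str] = []
    current: list[str] = []

    for sentence in sentences:
        words = sentence.split()
        if not words:
            continue

        # A single sentence longer than the cap has to be hard-split.
        while len(words) > max_words:
            if current:
                chunks.append(" ".join(current))
                current = []
            chunks.append(" ".join(words[:max_words]))
            words = words[max_words:]

        # Start a new chunk if this sentence would overflow the current one.
        if current and len(current) + len(words) > max_words:
            chunks.append(" ".join(current))
            current = []

        current.extend(words)

    if current:
        chunks.append(" ".join(current))
    return chunks
```

Call it with `max_words=240` if you are testing with an account. The sentence splitter is deliberately crude, so abbreviations like "e.g." will produce some odd breaks.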

Paid plans look like this:

• Lite, annual billing: $3.99 per month for 5,000 words

• Higher tiers scale up to $23.99 per month for unlimited words

The pricing is not wild compared to similar tools, but the detection results were so weak in my tests that I did not see the value.

Privacy, ownership, and where it is based

Their privacy policy made me pause longer than the pricing.

From what I read:

• They give themselves broad rights over submitted text

• They do not clearly state how long your content stays on their servers after processing

• No clear, detailed data retention window

For some people this will not matter. If you are rewriting generic SEO fodder, ownership and retention might not bother you. If you handle client drafts, internal docs, or anything under NDA, this kind of vague policy is a problem.

Another detail: GPTinf is run by a single proprietor in Ukraine. Not good or bad on its own, but if you work in a company with strict data residency rules or compliance checks, this kind of jurisdiction information tends to be important.

I tried asking myself if I would feed it confidential material, and the answer was no.

Screenshots and proof

Here is the screenshot from their own interface that I grabbed while testing:

And here is another from the pricing section:

For verification of the results and more context, I used this writeup too:

Someone went through a similar process and posted detailed proof with detector screenshots.

How it compares in real use

When I tried to use these tools in something closer to a normal workflow, a pattern showed up:

• GPTinf produced text that read smooth enough, but detectors treated it as pure AI every time.

• Clever AI Humanizer gave me rewrites that scored better on GPTZero and ZeroGPT and stayed free without forcing me into a small word cap.

If your priority is detection evasion, GPTinf did not help me at all. If your priority is slightly cleaner wording, it is fine, but you already get that from any generic rewriting model.

What I would do if you are testing tools like this

Here is what worked for me and might save you time:

- Prepare one fixed batch of inputs: a few paragraphs you know came from ChatGPT or similar.

- Run that same batch through each humanizer you want to test.

- Send the outputs to multiple detectors such as GPTZero and ZeroGPT.

- Track the scores, not the marketing.

- Read the privacy policy and look for the retention period and who owns submitted text.

When I did that, GPTinf landed at the bottom of the list in terms of detection performance, even though they advertise 99% success on the homepage.
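If you want to keep the bookkeeping honest, the steps above can be sketched as a tiny harness. Everything here is hypothetical: `run_benchmark` is my own name, and the humanizers and detectors are plain Python callables you would have to supply yourself (for example by pasting results in by hand), since I am not assuming any of these tools expose an API. I am also assuming each detector hands back a score between 0.0 (human) and 1.0 (AI), and that anything under `threshold` counts as a pass.

```python
from statistics import mean
from typing import Callable, Dict, List, Tuple

# Hypothetical interfaces: none of these map to a real tool's API.
Humanizer = Callable[[str], str]    # takes text, returns rewritten text
Detector = Callable[[str], float]   # returns 0.0 (human) .. 1.0 (AI)


def run_benchmark(
    samples: List[str],
    humanizers: Dict[str, Humanizer],
    detectors: Dict[str, Detector],
    threshold: float = 0.5,
) -> Dict[Tuple[str, str], Dict[str, float]]:
    """Run one fixed batch through every humanizer/detector pair and tally the scores."""
    if not samples:
        raise ValueError("need at least one sample")

    results: Dict[Tuple[str, str], Dict[str, float]] = {}
    for h_name, humanize in humanizers.items():
        # Same inputs for every tool, so the comparison is fair.
        outputs = [humanize(sample) for sample in samples]
        for d_name, detect in detectors.items():
            scores = [detect(output) for output in outputs]
            passed = sum(1 for score in scores if score < threshold)
            results[(h_name, d_name)] = {
                "mean_ai_score": mean(scores),
                "pass_rate": passed / len(scores),
            }
    return results
```

The point of keying results by (humanizer, detector) is that you can line up a tool's measured pass rate against whatever number is on its homepage, per detector, instead of relying on one overall impression.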

So my honest take: if detection scores matter to you, or you care about where your text goes and for how long, there are better options right now. For me, Clever AI Humanizer did the job better and stayed free during my tests.

Short version. For what you want, GPTinf looks like a bad risk.

My take after playing with it and reading what @mikeappsreviewer posted:

- Detection safety

• On my side, GPTinf output still trips GPTZero and ZeroGPT most of the time.

• Sentence rhythm stays very LLM‑ish. Clean, over‑organized, tidy endings. Detectors pick up on that pattern.

• Their “99% success rate” does not match real tests I have seen or done.

- SEO risk

• If you rely on a “humanizer” and trust the marketing, you set yourself up for a footprint across your whole site.

• If Google tightens AI‑content signals again, you do not want hundreds of posts written in the same detectable style.

• You lower your risk if you mix in real editing, add your own data, screenshots, internal links, and rewrite key sections by hand.

- Privacy and safety

• Their policy is vague on retention.

• Broad rights over submitted text are a red flag if you work with client content or niche research.

• One‑person operation in another jurisdiction adds compliance friction for any business that cares about data governance.

- Usefulness

• As a pure rewriter, it is okay, but so is any generic LLM.

• If your goal is “sounds human and does not trip detectors”, you do not get enough extra value to justify the risk.

• Free tier word cap is small, which makes testing annoying and long‑form workflows painful.

- What I would do instead

• Treat humanizers as helpers, not shields.

– Use AI to draft.

– Run a lighter humanizer if you want a different voice.

– Then hand edit, inject your experience, add stats, examples, and internal references.

• If you want a humanizer in the mix, Clever AI Humanizer did better in my tests, same as in @mikeappsreviewer’s writeup. It produced messier, more “human” text and scored higher on GPTZero and ZeroGPT.

• For SEO, focus on:

– Unique insights or data you own.

– Clear topical structure and internal linking.

– Strong on‑page signals.

– Human review of important pages.

So, is GPTinf safe and effective for what you want?

Safe: no, because of the vague data handling and the weak detection performance.

Effective: only as a basic rewriter, not as a serious “avoid AI flags” tool.

If you go forward anyway, test it on a few throwaway pages first, watch how detectors respond, and do not use it on your core money pages.

Short version: if your main concern is “will this keep my site safe from AI flags,” GPTinf would make me nervous.

Couple of points that build on what @mikeappsreviewer and @vrijheidsvogel already showed:

- Detection vs “human sound”

GPTinf can make text read a bit smoother, but that is cosmetic. Detectors do not really care if the prose feels nicer to humans. They care about statistical fingerprints. From what they both tested, GPTinf barely shifts that fingerprint at all. So you get slightly cleaner wording, but the AI footprint stays. For SEO, that is the worst combo: detectable plus templated voice across a lot of pages.

- The “99 percent” claim

I am always skeptical when a tool quotes a huge success rate without naming:

- which detectors

- which models

- what type of content

Without that, you are basically trusting a marketing slide. Their results vs GPTZero and ZeroGPT in real tests are closer to “shrug” than “near perfect.” That mismatch alone would keep me from building a whole site workflow around it.

- Actual SEO risk, not just detector scores

Even if GPTinf magically dodged GPTZero tomorrow, Google is not using those public tools anyway. The SEO risk is more about:

- massive volume of content with very similar structure

- generic info with no real source expertise

- thin pages that look like rewritten summaries of existing stuff

A humanizer that mostly just paraphrases text is not going to fix those issues. At best it hides the origin a bit. At worst it gives you a false sense of security so you publish more of the same.

- Privacy and client work

Here I slightly disagree with some of the doom talk. For pure affiliate fluff on non critical projects, I do not care as much about where the tool is based. But if you are touching:

- client drafts

- unpublished research

- anything under NDA

then the vague data handling is a real problem. “Broad rights over submitted text” plus no clear retention timeline is exactly what legal teams hate. If you ever have to prove where content went, that policy will not help you.

- Where GPTinf actually “fits”

If you treat it as:

- a cheap rewriter for small, low value pieces

- not a shield against AI detection

it is… fine. Not special. You could get something similar from any halfway decent LLM or rewriting tool. In that sense I think people are expecting way more from it than it is technically built to deliver.

- An alternative way to protect your site

Instead of hunting for a magic humanizer, build a safer stack like this:

- Use AI to rough draft sections only, not full posts

- Run it through something like Clever AI Humanizer if you need a more “human” rhythm

- Then do a real human pass where you:

  - add your own examples, screenshots, or data

  - change the structure, not just the wording

  - weave in internal links and niche specific insights

Once you do that, the “AI detector” question matters a lot less, because the content actually behaves like something a specialist would publish.

So to answer your original question directly:

- Safe: not really, if you are counting on it to hide AI at scale or to be compliant with stricter content policies.

- Effective: only as a basic rewriter. As a solution to “I do not want this to get flagged and hurt my SEO,” it is shaky at best.

If your site is important to your income, I would not anchor your workflow on GPTinf. Use it or Clever AI Humanizer as minor helpers if you want, but let your own editing and original input carry the SEO risk, not a “99 percent” marketing promise.