I’m working on a project and used an online AI content detector to check my text for originality. The results seemed inconsistent and flagged some of my human-written sections as AI-generated. Has anyone else experienced this problem? I’m looking for advice on how to get reliable results or which tools are most accurate.

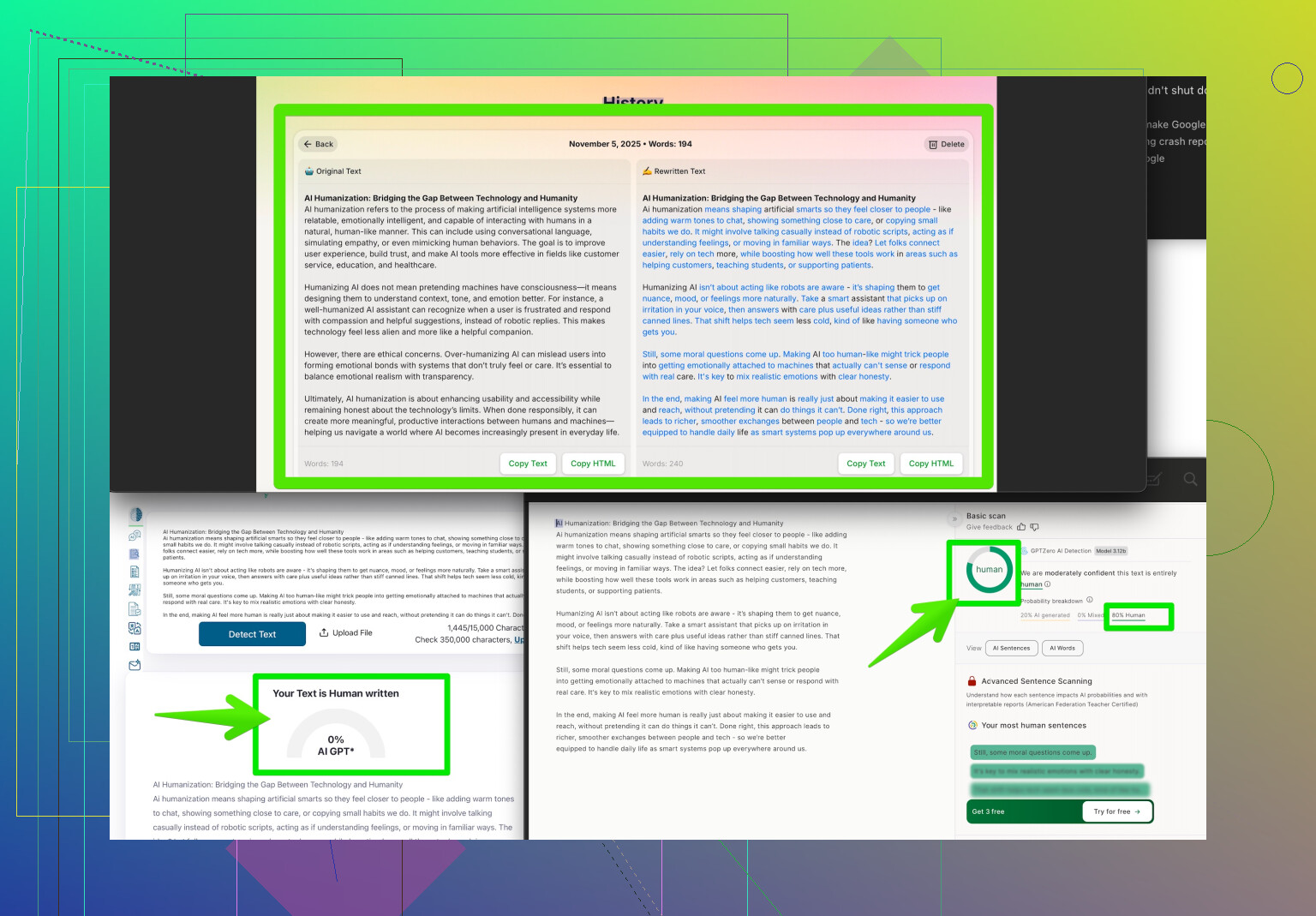

Yep, those AI content detectors can be wildly inconsistent, especially with nuanced or creative human writing. I’ve had a few personal blog posts, 100% written by me and full of typos and odd tangents, get flagged as AI. Meanwhile, machine-generated text sometimes breezes through without setting off any alarms. From what I’ve seen, most of these tools look for patterns, repetition, and formality that “usually” suggest AI, so creative writing or an unusual personal style can throw them off.

If a project requires you to show your content reads as human, you might wanna check out the Clever AI Humanizer. It’s designed to make AI-generated text appear more organic and less like, well, a bot wrote it. Their site pitches it as a way to make your content undetectable to AI scanners, and it gives you options to transform text while keeping a natural flow, which might help smooth out those false positives if you’re mixing AI and original work. Ultimately, though, these detectors shouldn’t be the final authority. Real originality and context can’t always be measured by algorithms, at least not yet!

AI content detectors? Yeah, they’re kinda like airport security machines: sometimes they flag your grandmother’s banana bread as a dangerous weapon while letting ten gallons of shampoo through. I’ve had several docs flagged as “highly likely AI” when they were literally just my late-night ramblings, typos and all. The inconsistency drives me nuts, especially when AI-written stuff, like those bland Wikipedia paraphrases, sneaks by as “totally human.”

It’s funny, but I kinda disagree with @byteguru’s optimism about making everything “undetectable.” Even if you use tools like Clever AI Humanizer (which I’ll admit can help), there isn’t a silver bullet. Most of these detectors just scan for stuff like repetition, formal structure, and low randomness—aka the opposite of how I write after two Red Bulls. Sometimes making things more casual, tossing in slang, or even purposely inserting weird sentence structures can help, but then your writing just gets… weird. Not always better.
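To make the "repetition and low randomness" point a bit more concrete, here is a rough Python sketch of the kind of surface statistics I'm talking about. To be clear, this is my own toy illustration and not how any real detector works: the function name `surface_stats` and the three metrics are assumptions I picked for the example, and actual tools presumably rely on far more than this.

```python
import re
from collections import Counter
from statistics import pstdev


def surface_stats(text: str) -> dict:
    """Toy surface statistics (hypothetical helper, not a real detector)."""
    # Lowercased word tokens; apostrophes are kept so "don't" stays one word.
    words = re.findall(r"[a-z']+", text.lower())
    # Naive sentence split on ., ! and ? (good enough for a sketch).
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]

    # Share of word trigrams that occur more than once: a crude repetition signal.
    trigrams = [tuple(words[i:i + 3]) for i in range(len(words) - 2)]
    counts = Counter(trigrams)
    repeated = sum(c for c in counts.values() if c > 1)

    return {
        # Distinct words / total words: lower means a more repetitive vocabulary.
        "type_token_ratio": len(set(words)) / len(words) if words else 0.0,
        # Spread of sentence lengths: lower means more uniform sentences.
        "sentence_length_stdev": pstdev(lengths) if len(lengths) > 1 else 0.0,
        "repeated_trigram_share": repeated / len(trigrams) if trigrams else 0.0,
    }


uniform = "The cat sat down. The cat sat down. The cat sat down."
varied = ("Ugh. I stayed up way too late rewriting this paragraph again, "
          "and honestly it still reads weird!")

print(surface_stats(uniform))
print(surface_stats(varied))
```

Numbers like these say something about style, not about who wrote the text. A tidy, formal human essay can look "uniform" on all three, and a rambling post scores as "varied" no matter where it came from, which is pretty much why the false positives happen.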

Bottom line: these detectors aren’t that accurate—especially for stuff that doesn’t fit the “standard essay” mold. If you’re being graded or need a pass from a super picky checker, mix things up, sure, maybe give Clever AI Humanizer a try. But don’t stress over every false positive. The tech just isn’t there yet.

And if you want more tricks from folks wrestling with the same headache, check out Reddit’s best Humanize-AI tips and real user experiences. The struggle is real!